VOICE INTERACTION DESIGN

and a cooking voice assistant for neurodivergent folks

Did you know…

30-40% of adults are thought to be neurodivergent?

This project began when I was cooking with my brother and watched him struggle to perform tasks in order, on time, and efficiently.

As a lover of all things tech, especially voice interaction design, I set out to help improve his relationship with cooking.

2.21% of adults are on the autism spectrum

4-5% of adults have ADHD

5-15% of Americans are dyslexic

Meet the project

Main character: Alexa Show

Target audience: My brother and other neurodivergent folks

Sole designer: Nicole Whiting (voice, UX/UI)

Timeline: 1 month

Brainstorming

To start brainstorming and spitballing ideas, I set out to identify the user's needs by hypothesizing persona stories and conducting quick guerrilla-style interviews with my brother.

Some of his biggest hang-ups in the kitchen were:

1. "By the time I'm hungry, I'm already needing to be eating. Planning ahead is hard, so I need to have help with that stage."
2. "I get overwhelmed with all the things I need to do for a recipe, and then miss steps and burn things."

From there, I listed the top-level functions the app might be able to perform.

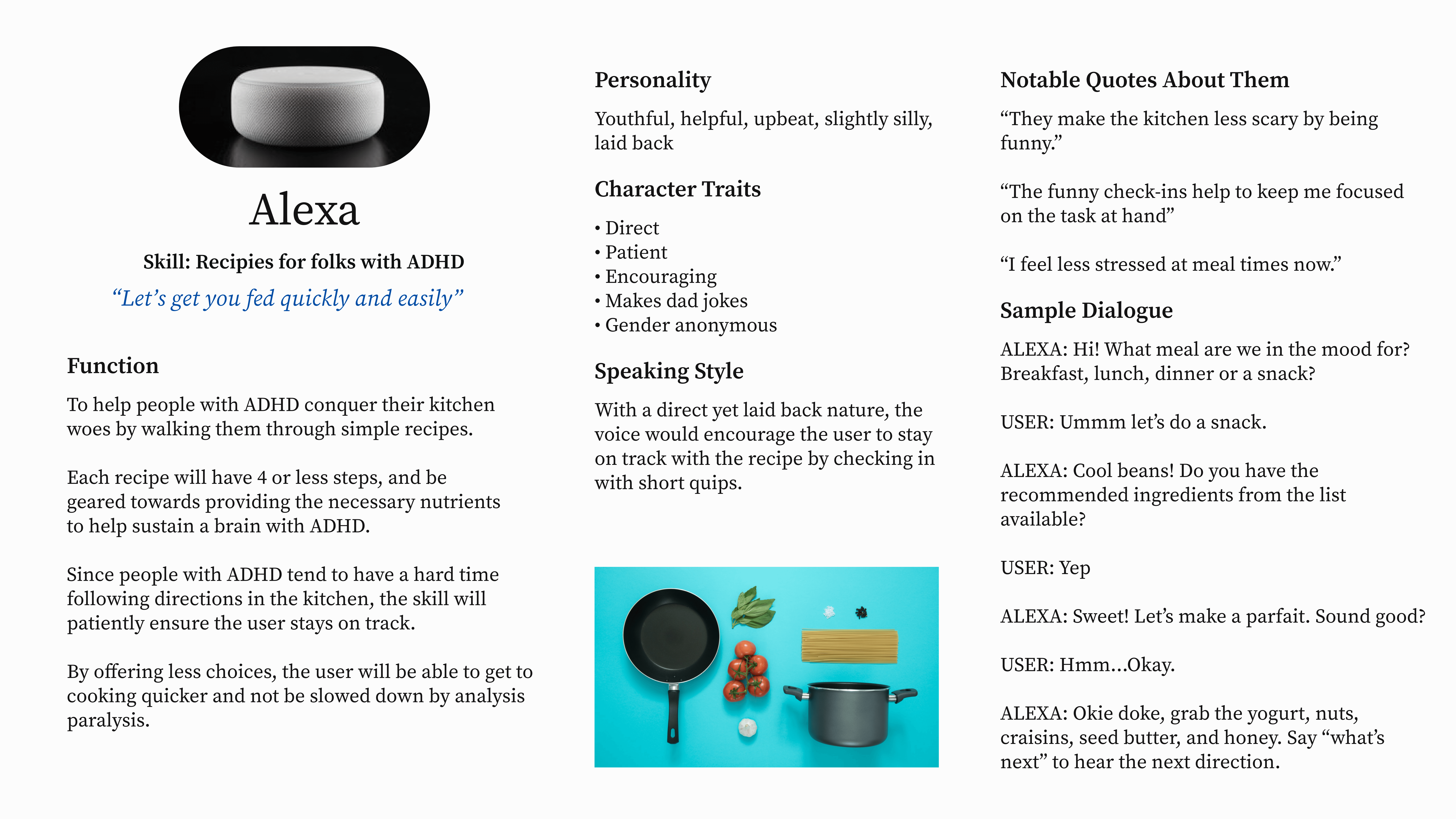

Personas

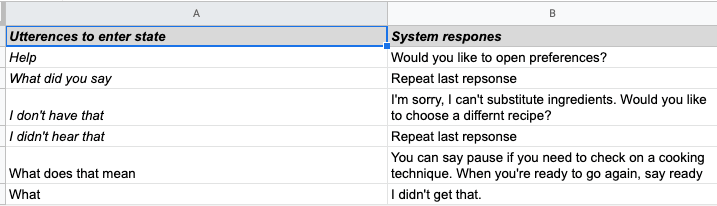

One neat thing about voice design is that you create a persona for the voice assistant itself. Here are the two personas: one for the user and one for the voice assistant.

User flows

Mapping out user flows is often a challenge, and even more so for voice interactions, because there are so many possible paths to account for. This took many iterations.

Sample dialogs

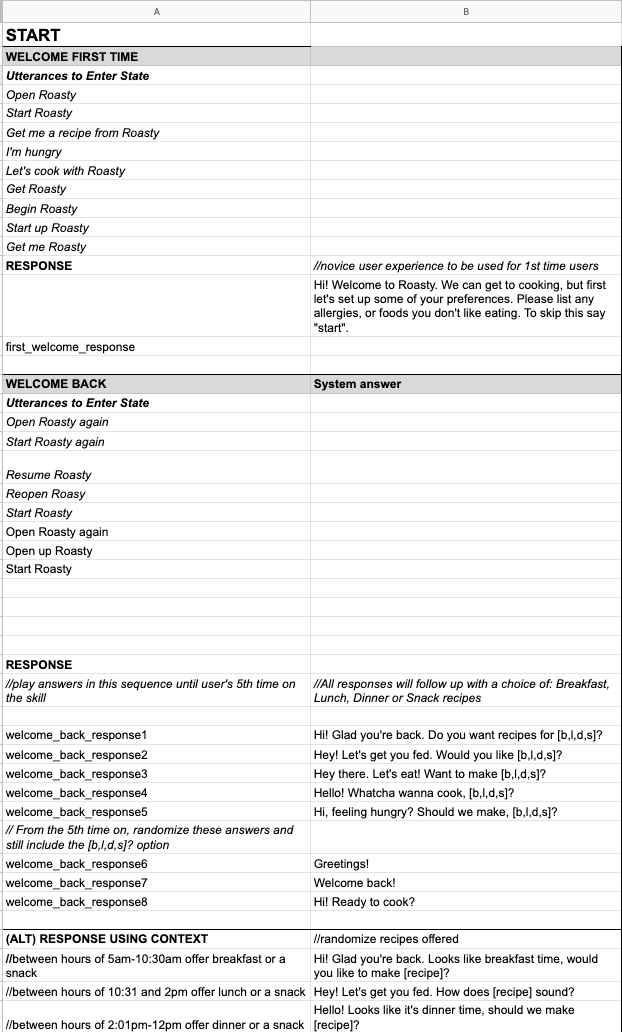

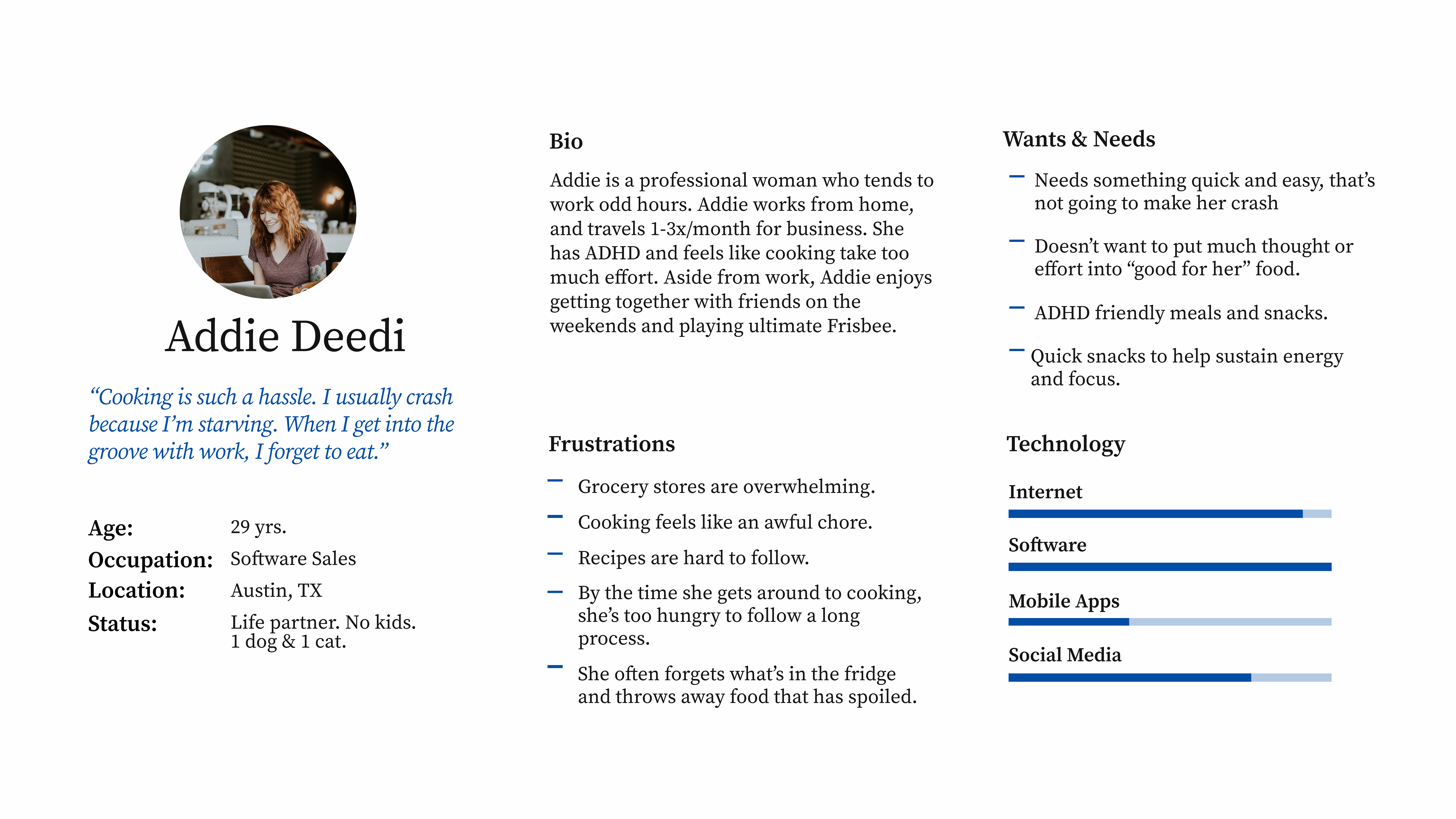

The purpose of these sample dialogs is to map out and document the basic interactions that users could have with home assistants.

This was one of the most important parts of the project for hashing out how the voice assistant would "act." I needed to figure out how the audience would perceive the assistant. I didn't want the voice to sound too firm or authoritative. Folks with ADHD often have what's called oppositional defiant disorder (ODD), so they can have a tough time "being told what to do." For this to succeed, I needed to strike a good balance between helpful and gentle. I opted for light and springy as the essence of the personality.

I wanted a laid-back, straight-to-the-point, funny, friendly assistant. In a perfect world, the voice would be genderless, preferably using Q, the genderless voice (IT'S SO COOL!).

In this first iteration, I focused mostly on the "sunny day" cases. That scenario would be lovely, but people just aren't that "perfect." It's better to plan ahead for edge cases and frustrated users. How would I handle ambiguity, or users stuck in a loop?

In this set, I used the same dialog outlines and main ideas, while tackling more nuanced problems.

After making a list of edge cases, I settled on focusing on the "most-likelies" and landed here.

Scripts

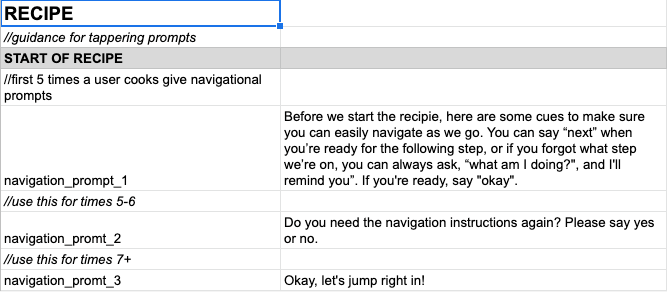

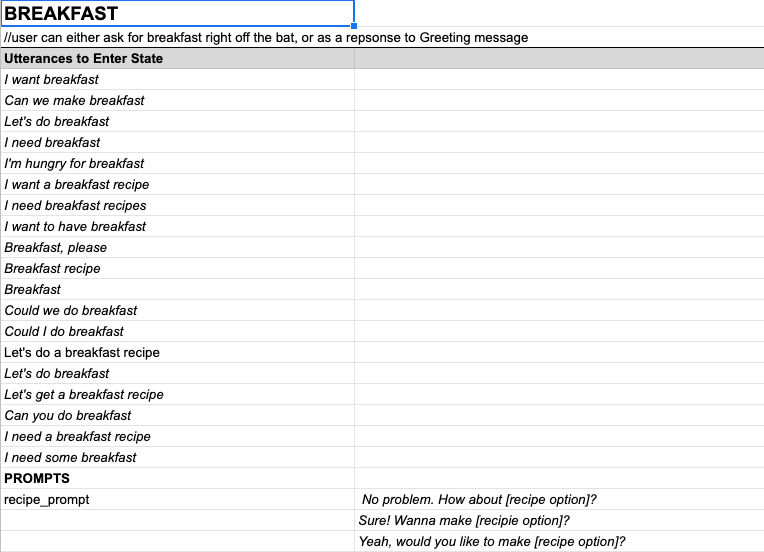

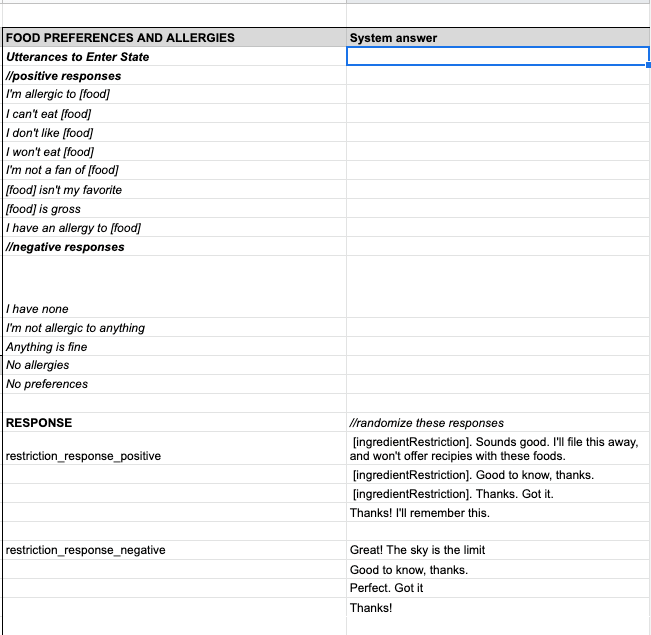

Here I kept a running record of the scripts I would implement in the code framework. I worked in JSON for this project, as recommended for writing Alexa skills.

I included an alternate response for the welcome message so I could test both and see which prompt users prefer. For example, one alternate response uses the time of day to offer a recipe for the "correct" meal (e.g., at 11 a.m., it's lunchtime).
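To make the alternate welcome idea concrete, here's a minimal Python sketch of how the time-of-day variant and the A/B split between the two welcomes might work. It's an illustration under stated assumptions, not the skill's actual code: the meal windows (beyond 11 a.m. counting as lunch), the prompt wording, and the helper names `meal_for_hour`, `assign_variant`, and `welcome_message` are all hypothetical.

```python
import zlib
from datetime import datetime

# Hypothetical meal windows on a 24-hour clock (start inclusive, end exclusive).
# The source only states that 11 a.m. counts as lunchtime; the rest is assumed.
MEAL_WINDOWS = [
    (5, 11, "breakfast"),
    (11, 16, "lunch"),
    (16, 22, "dinner"),
]

# Placeholder prompt text; the real scripts live in the project's JSON files.
STANDARD_WELCOME = "Hi there! What would you like to cook today?"
TIMED_WELCOME = "Hi there! It's about {meal} time. Want me to suggest a {meal} recipe?"


def meal_for_hour(hour: int) -> str:
    """Map an hour (0-23) to a meal name, falling back to 'snack' outside the windows."""
    for start, end, meal in MEAL_WINDOWS:
        if start <= hour < end:
            return meal
    return "snack"


def assign_variant(user_id: str) -> str:
    """Deterministically split users into two test groups so each user always hears the same welcome."""
    # crc32 is stable across runs, unlike Python's built-in hash() for strings.
    return "timed" if zlib.crc32(user_id.encode("utf-8")) % 2 == 0 else "standard"


def welcome_message(user_id: str, now: datetime) -> str:
    """Return the welcome prompt for this user's test variant."""
    if assign_variant(user_id) == "timed":
        return TIMED_WELCOME.format(meal=meal_for_hour(now.hour))
    return STANDARD_WELCOME
```

A deterministic split means a returning user hears the same welcome every session, which keeps the comparison between the two prompts clean.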

The novice prompt asks users to list their allergies and food aversions.

At the start of each recipe, the system gives a quick explanation of the navigational cues the user can say. As the user uses the app more often, the cues get shorter until they disappear entirely. The idea is that repeated use builds the user's memory of the commands, so over time they need less direction.
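Here's a minimal Python sketch of how that tapering might be scheduled. The thresholds, the cue wording, counting completed recipes as the measure of "more often," and the helper names `navigation_cue` and `recipe_intro` are all assumptions for illustration, not the project's actual script.

```python
# Hypothetical cue schedule: (recipe count at which this tier ends, cue text).
# Thresholds and wording are assumptions, not the project's actual script.
CUE_SCHEDULE = [
    (2, "You can say 'next' to hear the next step, 'repeat' to hear this step again, "
        "or 'go back' for the previous step."),
    (5, "Say 'next', 'repeat', or 'go back' any time."),
    (8, "Say 'help' if you get stuck."),
]


def navigation_cue(recipes_completed: int) -> str:
    """Return a shorter cue as the user gains experience, and no cue once past the last tier."""
    for limit, cue in CUE_SCHEDULE:
        if recipes_completed < limit:
            return cue
    return ""


def recipe_intro(recipe_name: str, recipes_completed: int) -> str:
    """Prepend the current navigation cue (if any) with the recipe's opening line."""
    cue = navigation_cue(recipes_completed)
    opening = f"Let's make {recipe_name}."
    return f"{opening} {cue}" if cue else opening
```

Keeping the schedule as data rather than branching logic makes it easy to adjust the thresholds after testing without touching the dialog code.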

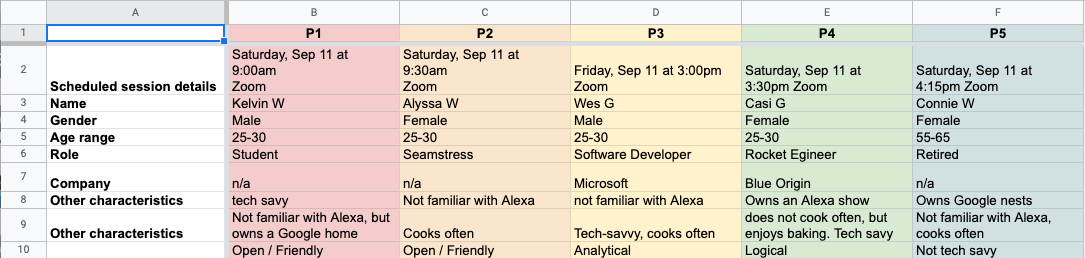

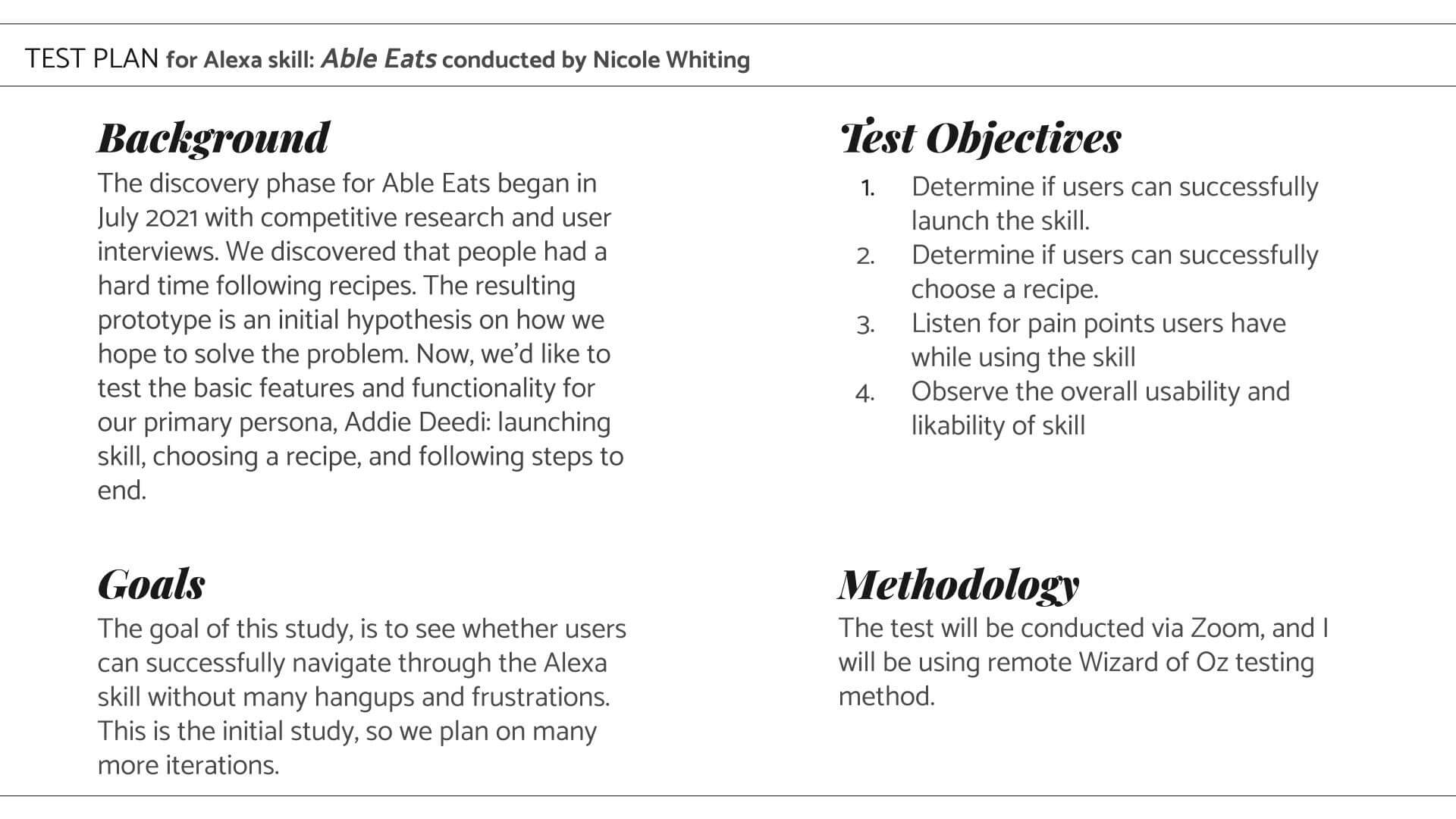

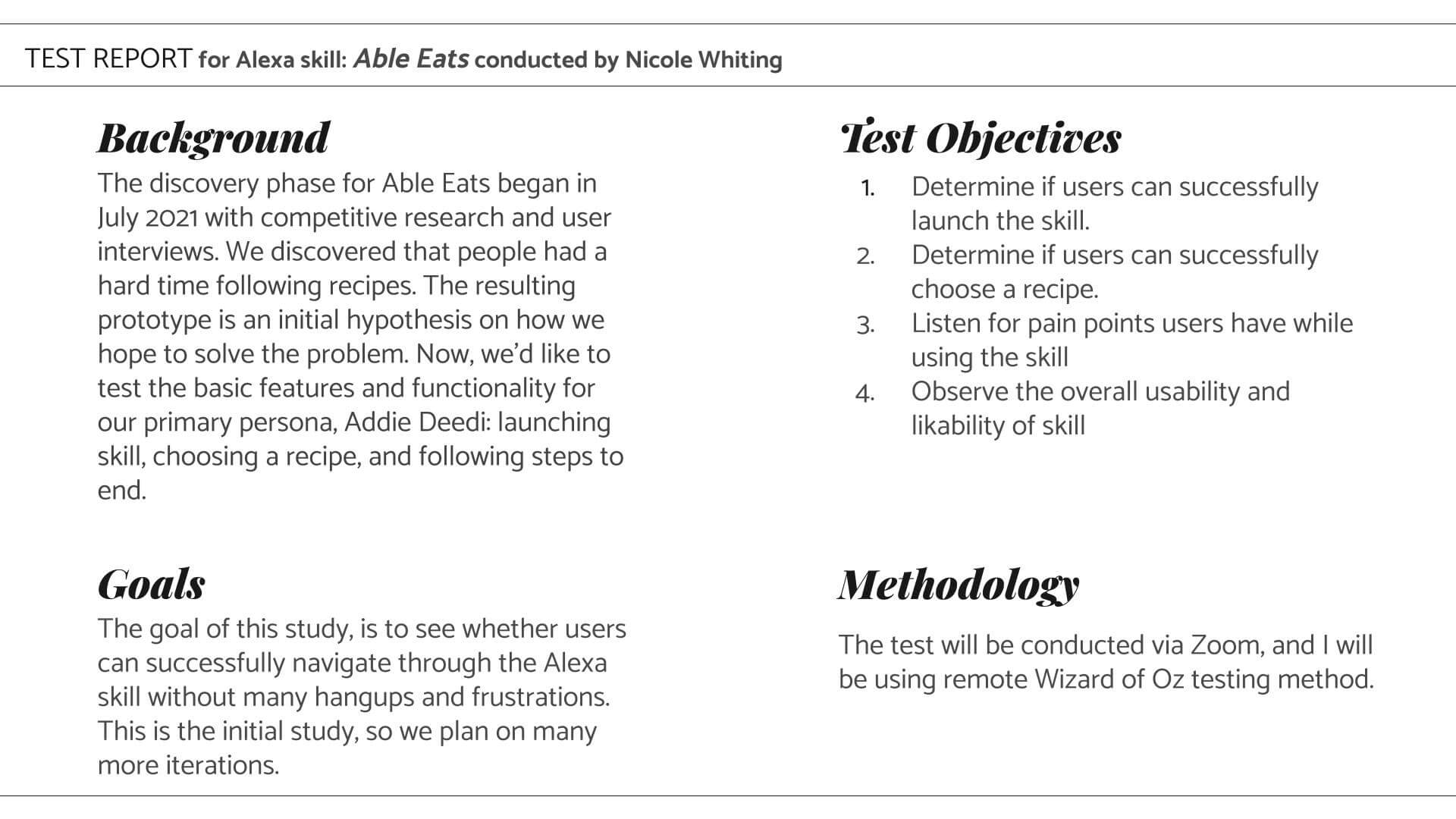

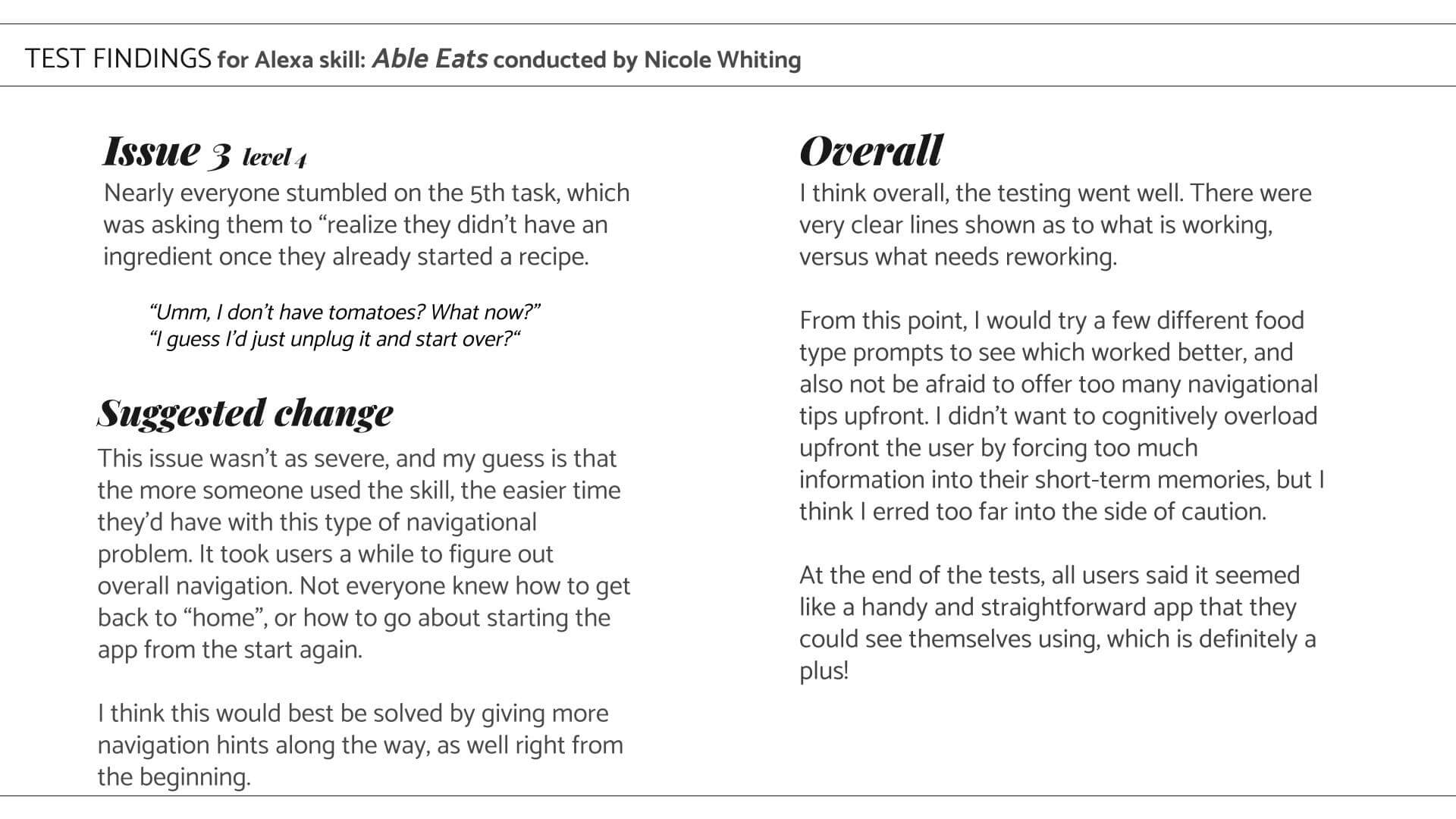

Usability testing

As with any UX design project, user testing is essential; it is, after all, what makes for good usability. I reached out to my brother, who had been my go-to for everything on this project so far, as well as a few others who fit the target audience.

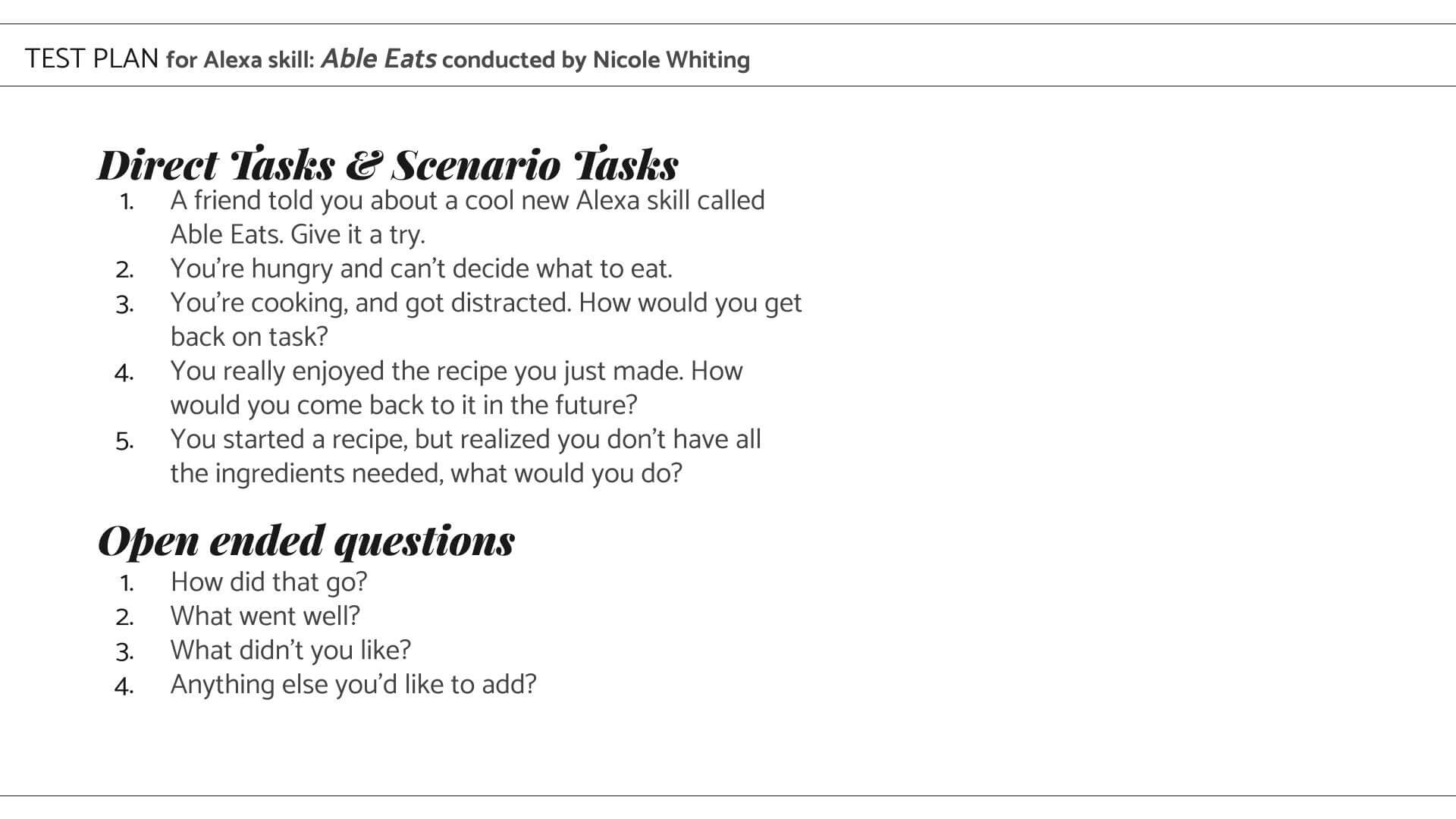

We ran Wizard of Oz testing, which is essentially role-playing: I played the part of the voice assistant, Alexa, and the users played, well, themselves.

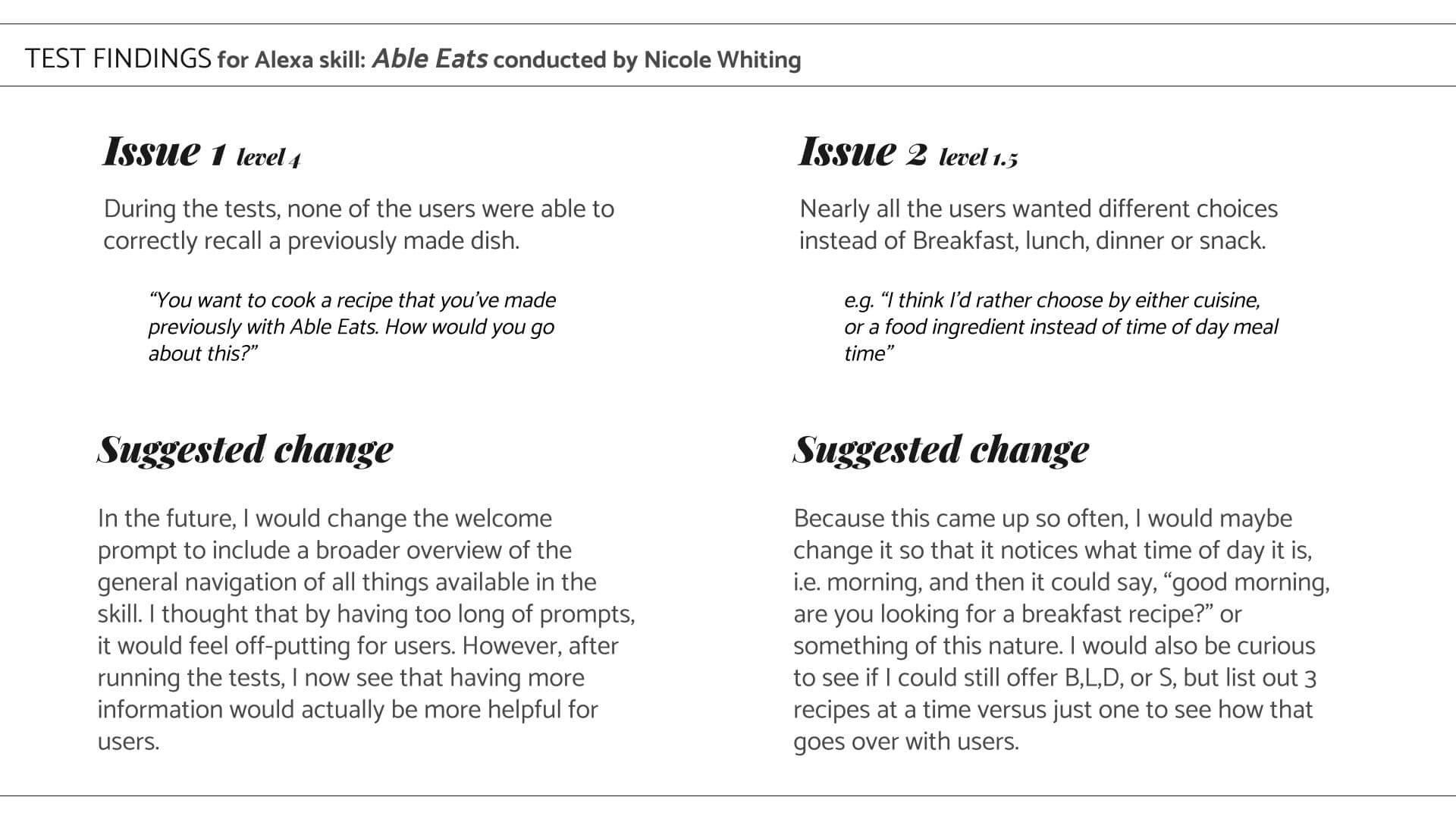

During testing, I found errors in the assumptions I had made when writing the scripts. I also discovered how many users would try to navigate the app using only their voice, which proved to be difficult.


Test findings

Once I had implemented the findings, I submitted the skill to the Alexa Skills team to be reviewed and verified. As with most skills, it got kicked back to me (they are very strict, which is great!).

In the end, I decided not to put in the extra work to get this skill up and running, because I think the idea would work better as a multimodal app on a phone or tablet. During testing, nearly every user said, "This is great, but it would be much better with a screen, so I could read along and listen at the same time."

Conclusion and next steps

This project taught me so much about voice interaction design: its potential as well as its current pain points.

I'm super excited about the next steps for this app. I plan to turn it into a multimodal app while still focusing on neurodivergent folks. My hope is that pairing an on-screen app with a voice assistant will let more people cook with less stress, overwhelm, and anxiety.

I plan to do more testing to learn the specifics of designing for neurodivergent (ND) people. There isn't much research out there right now, so I'm putting together surveys and have a few interviews lined up with medical professionals. From there, I'll take what I learn back to the drawing board.

Stay tuned!